Download catalogue of technologies

Texterra: a semantic analyzer

Texterra — is a scalable platform for extracting semantics from text. It contains the complete fundamental set of technologies for creating multifunctional applications for text analysis. Texterra bases its semantic analysis approach on concept identification. The platform is included in the Unified Register of Russian Programs (No.4048).

Features and advantages

Texterra performs a unique analysis of Russian texts based on the identification of concepts instead of just words. It differs from foreign competitors by paying the most attention to Russian language. The analyzer builds on fundamental research results and integrates with the Elasticsearch search system greatly expanding its capabilities. The successful combination of technologies allows the platform to compete with the projects similar to IBM Watson Natural Language Understanding.

Texterra provides:

- High text processing speed (morphological analysis: 69 000 words per second, syntactic analysis: 39 100 words per second, coreference resolution: 10 100 words per second, full text analysis: approximately 13 600 words per second).

- Maximum attention to Russian language (unlike similar spaCy and UDPipe projects, as well as IBM Watson Natural Language Understanding, which does not support the analysis of emotions and concepts in Russian texts).

- Large knowledge base (more than 7 million concepts).

- Building knowledge base without expert involvement (automatic construction and update using Wikipedia, MediaWiki, Linked Open Data, etc.).

- Scalability both in word processing speed and in knowledge base size (using Apache Ignite and the Asperitas cloud technology developed at ISP RAS).

- High text analysis accuracy due to a number of key features:

- multi-level search by related concepts;

- adaptability to slang, hashtags (#) and errors in text;

- emotion analysis (with separation of attitude towards objects and their attributes);

- determining relationships between people and companies based on text information;

- detecting implicit object references in discussions.

- Fast adaptation and tailored solutions development.

- Supporting two use cases:

- as a deployed software system on a customer’s local server providing either HTTP REST-based or RMI protocol access;

- online at https://texterra.ispras.ru/;

- Simple and fast support for specific domains and ability to integrate new languages backed up by a modern machine learning approach.

Who is Texterra target audience?

- Corporate software developers (e.g. chat bot developers).

- Developers of semantic search systems for specific domains (such as information security, medicine, auditing, etc.).

- Developers of arbitrary text processing applications.

Texterra deployment stories

Texterra has been productized in the joint projects with HP and Samsung (the project goals were to develop a technology for analyzing corporate reports or supporting smart TVs). Currently Texterra backs up several ISP RAS innovative products, e.g. Talisman social media analysis. A number of Russian government agencies also use Texterra.

Supported languages

Texterra analyzes Russian and English texts.

System requirements

- A system supported by Java 8.

- 16 Gb RAM or more for each supported language.

- 64-bit operating system is recommended.

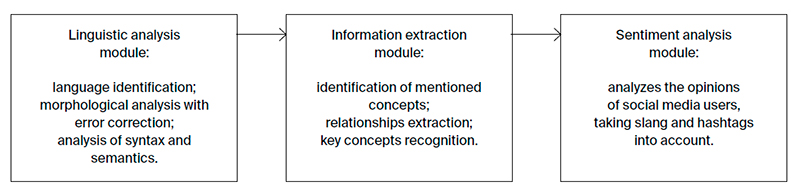

Texterra workflow

Projects

Developer/Participant

Back to the list of technologies of ISP RAS