
About the Information Systems Group

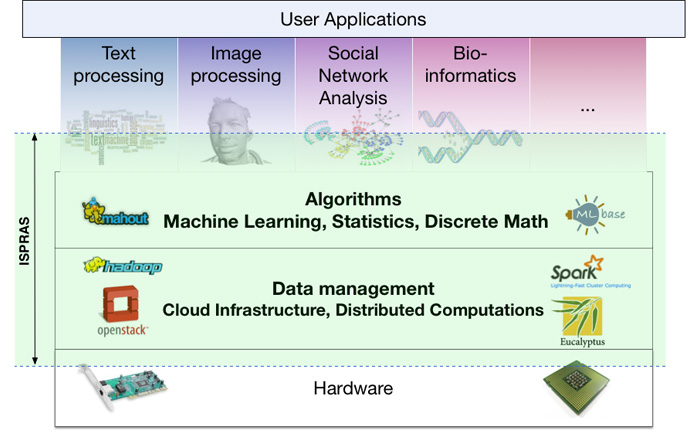

The research group works on a broad range of data management and information systems problems. The Information Systems Group's interests include system software for data analysis, information extraction, database management systems, distributed data processing, and cloud computing technology. In addition, the group develops algorithms for statistical data analysis and machine learning, as well as software for specific problems in applied fields. We are developing software for intelligent text analysis, social network analysis, bioinformatics tasks, and multimedia processing.

The group's main research directions are natural language processing, social network analysis, and systems for analyzing and processing large amounts of data.

Natural language processing and text mining

Natural language processing (NLP) is a research field at the intersection of artificial intelligence and linguistics that studies the computational analysis and synthesis of speech and text in natural languages. The history of NLP began almost simultaneously with the appearance of the first computers, and the field is currently experiencing another rise driven by the explosive growth of computing power and of available textual information, both as "raw" web data and as structured, annotated resources such as Wikipedia and Freebase.

The scientific interests of the Information Systems Group are closest to the following areas:

- Semantic analysis of texts, including semantic annotation, word sense disambiguation, key concept extraction, and automatic knowledge base construction.

- Information retrieval, including semantic search and exploratory search.

- Information extraction, including named entity recognition, term extraction, and coreference resolution.

- Sentiment analysis and opinion mining.

In our research we mostly combine statistical methods (usually based on machine learning) with linguistic methods. Given the nature of modern text and speech data, we also use methods from related areas, including social media analysis and data management.

Social network analysis

Social network analysis is a direction of modern computational sociology that deals with describing and analyzing networks of social interaction and communication. One of the most interesting directions is the study of online social networks (VKontakte, Facebook, Twitter, YouTube, etc.), which have become an integral part of the Internet. Modern social networks combine different types of nodes and edges, as well as a variety of sources of text, graph, multimedia, and other types of user data.

Our team is developing a technology stack for analyzing user data from social networks. Its main components include original methods for:

- Discovering implicit user communities based on social relations.

- User identity resolution across social networks: finding the different virtual personas of the same user in multiple social networks.

- Identifying users' demographic attributes (gender, age, religious and political beliefs, marital status, and level of education) through linguistic analysis of their messages.

- Measuring user influence in social networks based on social connections and textual content.

- Generating large random graphs with the properties of social networks and a given user community structure, together with profile attributes, social relationships, communities, and text messages for the generated users.

- Collecting user data from social services.
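The graph-generation item above can be illustrated with a planted-partition model (a simple stochastic block model), one standard way to produce random graphs with a prescribed community structure. This is a minimal sketch under our own assumptions, not the group's generator: the function name `planted_partition_graph` and the parameters `p_in` and `p_out` are hypothetical. The pairwise loop is quadratic in the number of nodes, so genuinely large graphs would need a sparser sampling strategy, and the sketch does not generate profile attributes or messages.

```python
import random
from itertools import combinations


def planted_partition_graph(community_sizes, p_in, p_out, seed=None):
    """Hypothetical helper: random undirected graph with a given community structure.

    Nodes in the same community are linked with probability p_in, nodes in
    different communities with probability p_out. Choosing p_in > p_out gives
    dense communities with sparse links between them.
    Returns (edges, membership), where membership[v] is the community of node v.
    """
    rng = random.Random(seed)

    # Assign consecutive node ids to communities: sizes [3, 2] -> [0, 0, 0, 1, 1].
    membership = []
    for community, size in enumerate(community_sizes):
        membership.extend([community] * size)

    # Visit every unordered pair once (u < v) and keep the edge with the
    # probability that matches the pair's community relationship.
    # This is O(n^2), which is fine for a sketch but not for very large graphs.
    edges = []
    for u, v in combinations(range(len(membership)), 2):
        p = p_in if membership[u] == membership[v] else p_out
        if rng.random() < p:
            edges.append((u, v))
    return edges, membership


if __name__ == "__main__":
    edges, membership = planted_partition_graph([50, 30, 20], p_in=0.2, p_out=0.01, seed=42)
    internal = sum(membership[u] == membership[v] for u, v in edges)
    print(f"{len(edges)} edges, {internal} inside communities")
```

With these example parameters most edges should fall inside communities, which is the property a community-detection method would later try to recover.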

Our technologies are based on machine learning, probabilistic modeling, graph algorithms, and natural language processing methods, as well as modern technologies for distributed big data analysis. Most methods combine the analysis of network data (social connections between users) and text data (messages and user profiles).
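For the community-discovery direction listed above, label propagation is one well-known graph algorithm that finds implicit communities from social connections alone. The sketch below is an illustrative assumption, not a description of the group's original methods: `label_propagation` is a hypothetical helper, it uses only network data (no text), and results can vary between runs because node order and ties are randomized.

```python
import random
from collections import Counter, defaultdict


def label_propagation(edges, num_nodes, max_iter=100, seed=None):
    """Hypothetical helper: asynchronous label propagation for community detection.

    Every node starts with its own label. In random order, each node adopts the
    label most frequent among its neighbours (ties broken at random). A node
    keeps its current label if that label is already among the most frequent.
    Stops after a full pass with no changes or after max_iter passes.
    """
    rng = random.Random(seed)

    # Build an undirected adjacency list from the edge list.
    neighbours = defaultdict(list)
    for u, v in edges:
        neighbours[u].append(v)
        neighbours[v].append(u)

    labels = list(range(num_nodes))
    order = list(range(num_nodes))
    for _ in range(max_iter):
        rng.shuffle(order)
        changed = False
        for node in order:
            if not neighbours[node]:
                continue  # isolated nodes keep their own label
            counts = Counter(labels[n] for n in neighbours[node])
            best = max(counts.values())
            candidates = [label for label, c in counts.items() if c == best]
            if labels[node] in candidates:
                continue  # current label is already a majority label
            labels[node] = rng.choice(candidates)
            changed = True
        if not changed:
            break
    return labels


if __name__ == "__main__":
    # Two separate triangles should end up as two communities.
    triangles = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5)]
    print(label_propagation(triangles, 6, seed=1))
```

The label values themselves are arbitrary; only which nodes share a label matters.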

Infrastructure for analyzing and processing Big Data

One of the challenges facing humanity is developing effective methods for storing, processing, and analyzing the rapidly growing volume of data (Big Data). For example, Facebook users upload 83 million images daily, amounting to 200-400 TB, and Google processes more than 25 petabytes per day. The total amount of data doubles every eighteen months. The data come from different sources, do not share a common schema, and are neither syntactically nor semantically consistent.
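As a rough check on these figures (assuming decimal units, 1 TB = 10^12 bytes, and treating the 18-month doubling period as exact), the image volume implies an average of a few megabytes per image, and the doubling rate implies roughly a hundredfold growth per decade:

```latex
\frac{200\ \text{TB}}{83 \times 10^{6}\ \text{images}} \approx 2.4\ \text{MB}, \qquad
\frac{400\ \text{TB}}{83 \times 10^{6}\ \text{images}} \approx 4.8\ \text{MB}

D(t) = D_0 \cdot 2^{t/18} \quad (t \text{ in months}), \qquad
\frac{D(120)}{D_0} = 2^{120/18} \approx 102
```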

As a consequence, the way we work with data has changed over the past decade. In contrast to the last century, when data had value in itself and was often classified, most data is now available to everyone. The advantage goes to organizations that have learned to extract maximum value from data by deriving high-quality, timely information from it.

The Information Systems Group is developing tools for big data processing based on the Apache open-source technology stack. The central free-software platform for big data management is the Apache Hadoop project, free software for reliable, scalable distributed computing. Specialized systems for storing and processing big data are being developed around this project. One of the most promising is Apache Spark, which can significantly accelerate data processing. Members of the department actively contribute to its development.

Contacts

Denis Turdakov, Ph.D., Head of the Department.

E-mail: turdakov@ispras.ru

Phone: +7(495) 912-56-59 (ext. 461).

Prof. Sergey Kuznetcov, Chief Researcher.

E-mail: kuzloc@ispras.ru

Phone: +7(495) 912-56-59 (ext. 412).